In the rapidly evolving landscape of 2026, the baseline for artificial intelligence has shifted. It is no longer enough to simply "have an LLM." The real competitive advantage lies in how well that model understands your specific business logic, your industry’s safety requirements, and your customers' unique needs.

At AquSag Technologies, we speak daily with CTOs who are moving past the "out of the box" phase of AI. They are looking to build proprietary intelligence. However, the path to a high-performing, domain-specific model is paved with complex technical choices. The most critical among these is alignment.

How do you take a raw model and ensure it follows instructions, remains helpful, and stays within the "guardrails" of your organization? Today, two heavyweights dominate this conversation: Reinforcement Learning from Human Feedback (RLHF) and Direct Preference Optimization (DPO).

Why Alignment is the Secret Sauce of Enterprise AI

A raw Large Language Model is essentially a massive statistical engine. It predicts the next word (more precisely, the next token) based on patterns learned from trillions of tokens of text. While powerful, this "pre-trained" state often lacks a moral compass or a specific professional tone.

Alignment is the process of fine-tuning the model’s behavior to match human values and specific business objectives. Without proper alignment, a model might provide technically correct but dangerous advice, or use a tone that is completely inappropriate for your brand.

Alignment isn't just about safety; it is about utility. A model that understands the nuances of your industry is a model that generates ROI.

The Current State of the Industry

As we move deeper into 2026, we are seeing a trend toward smaller, highly specialized models rather than massive, general-purpose ones. This shift makes the choice between RLHF and DPO even more vital. Whether you are building Autonomous Agentic AI Workflows or a specialized medical assistant, the alignment method you choose dictates the stability and cost of your final product.

Understanding RLHF: The Gold Standard for Complex Reasoning

Reinforcement Learning from Human Feedback, or RLHF, has been the backbone of many of the best-known models on the market, including OpenAI's InstructGPT and ChatGPT. It is a sophisticated, multi-stage process that lets a model learn from human judgment.

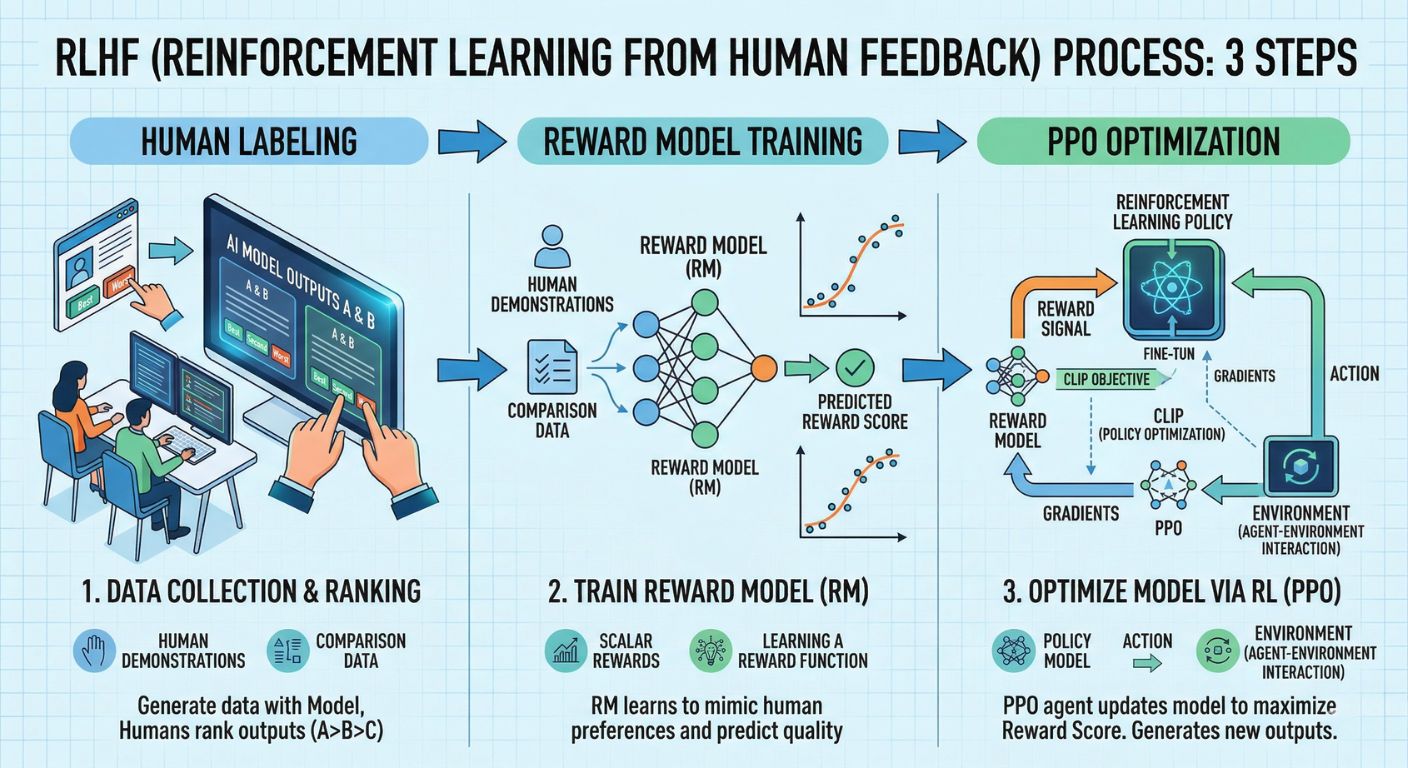

How the RLHF Pipeline Works

- Supervised Fine-Tuning (SFT): We start by showing the model high-quality examples of how a human would answer specific questions.

- Reward Model Training: This is the clever part. Humans rank several different model responses from best to worst. We then train a second model (the Reward Model), often smaller than the main LLM, to predict those human preferences by assigning each response a numeric score.

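- Reinforcement Learning (PPO): The main LLM is then trained using a technique called Proximal Policy Optimization. It "plays a game" where it tries to get the highest score possible from the Reward Model, while a KL-divergence penalty keeps it from drifting too far from the SFT model (and from exploiting quirks in the Reward Model).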
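To make the reward-model and PPO stages more concrete, here is a minimal Python sketch of the two quantities they revolve around. It assumes the common pairwise (Bradley-Terry) loss for training the Reward Model and a simplified, sequence-level KL penalty for the RL stage; the function names and the `beta=0.1` default are illustrative assumptions, not taken from any specific framework.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


def reward_model_loss(r_chosen: float, r_rejected: float) -> float:
    """Pairwise (Bradley-Terry) loss for one human-ranked pair.

    Equals -log(sigmoid(r_chosen - r_rejected)): close to 0 when the reward
    model scores the human-preferred answer well above the rejected one.
    """
    return softplus(-(r_chosen - r_rejected))


def shaped_reward(rm_score: float, logp_policy: float, logp_ref: float,
                  beta: float = 0.1) -> float:
    """Simplified reward that the PPO stage maximizes for one sampled response.

    logp_policy / logp_ref are the summed log-probabilities of the response
    under the model being trained and under the frozen SFT reference.
    Their difference is a sample estimate of the KL divergence, so subtracting
    beta * (logp_policy - logp_ref) penalizes drifting away from the SFT model.
    """
    return rm_score - beta * (logp_policy - logp_ref)


# Usage: a correctly ranked pair gives a small loss
print(reward_model_loss(2.0, -1.0))
print(shaped_reward(rm_score=1.5, logp_policy=-42.0, logp_ref=-45.0))
```

Production pipelines compute these on batched tensors and usually apply the KL term per token rather than per sequence; the sketch only shows the shape of the objective.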

The Strengths of RLHF

RLHF is exceptionally good at "reasoning." Because it uses a separate Reward Model and keeps generating and scoring fresh responses during training, it can capture subtle nuances that simpler methods might miss. This is why it is our preferred choice at AquSag when building systems that require AI Red-Teaming and Governance, where the model must navigate complex ethical and safety boundaries.

Direct Preference Optimization (DPO): The Modern, Efficient Challenger

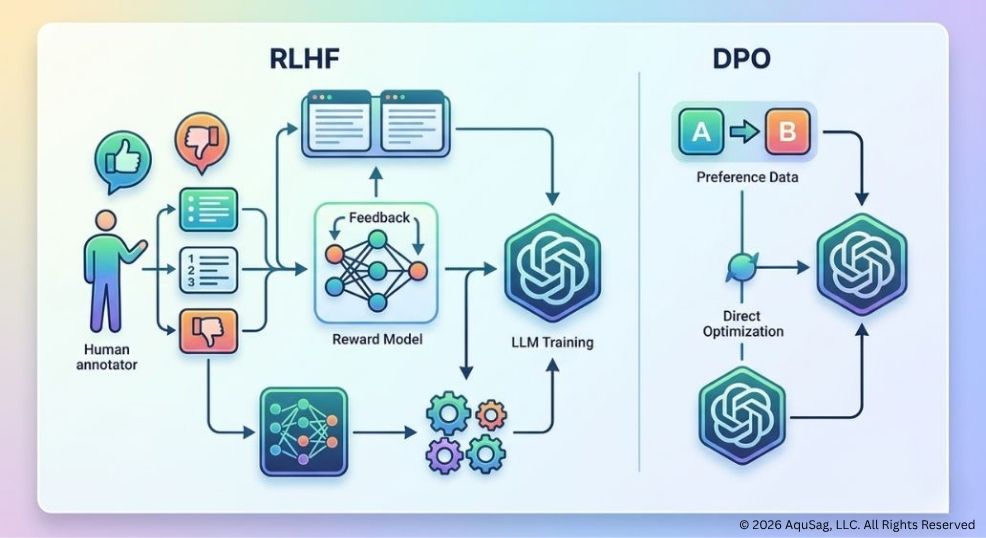

While RLHF is powerful, it is also notoriously difficult to implement. It requires managing multiple models simultaneously (in a typical PPO setup: the policy being trained, a frozen reference copy, the Reward Model, and a value model) and is prone to "instability" during training. This is where Direct Preference Optimization (DPO) comes in.

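DPO is a newer approach (introduced in 2023) that simplifies the alignment process. Instead of training a separate Reward Model and running reinforcement learning, DPO treats alignment as a simple classification problem: for each pair of answers, the model is trained with a logistic loss to prefer the chosen answer over the rejected one, relative to a frozen reference copy of itself.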
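For readers who want the math, the original DPO paper (Rafailov et al., 2023) writes the objective as a logistic loss over preference triples. Here x is the prompt, y_w the preferred answer, y_l the rejected one, π_θ the model being trained, π_ref a frozen copy of the starting (usually SFT) model, β a hyperparameter controlling how far the model may move from the reference, and σ the sigmoid function:

```latex
\mathcal{L}_{\mathrm{DPO}}(\pi_\theta; \pi_{\mathrm{ref}}) =
  -\,\mathbb{E}_{(x,\, y_w,\, y_l) \sim \mathcal{D}}
  \left[ \log \sigma\!\left(
    \beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)}
    - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}
  \right) \right]
```

The term β log(π_θ / π_ref) plays the role of an implicit reward, which is why no separately trained Reward Model is needed.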

Why DPO is Gaining Traction in 2026

- Simplicity: You don't need a separate Reward Model or a PPO loop, only the model being trained and a frozen copy of it as a reference. You simply show the model pairs of "preferred" and "rejected" answers, and it learns to shift probability toward the better one.

- Stability: DPO is much less likely to "crash" or produce weird, nonsensical outputs during the training phase.

- Cost-Effectiveness: Because it requires less compute power and fewer moving parts, DPO is a cornerstone of Cost-Efficient AI Scaling strategies for startups and mid-market firms.
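The DPO loss itself is short. Below is a minimal, dependency-free Python sketch of the per-example loss, assuming you have already computed each response's summed log-probability under the model being trained and under a frozen reference copy. The function names and the `beta=0.1` default are illustrative assumptions; production implementations (for example, the `DPOTrainer` in Hugging Face's TRL library) operate on batched tensors instead of Python floats.

```python
import math
from typing import Sequence, Tuple


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one (prompt, chosen, rejected) example.

    Each argument is the summed log-probability of a full response under
    either the model being trained (policy) or the frozen reference model.
    """
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    # Binary logistic loss: push the chosen log-ratio above the rejected one
    return -log_sigmoid(beta * (chosen_logratio - rejected_logratio))


def dpo_batch_loss(batch: Sequence[Tuple[float, float, float, float]],
                   beta: float = 0.1) -> float:
    """Mean DPO loss over 4-tuples given in dpo_loss argument order."""
    return sum(dpo_loss(*example, beta=beta) for example in batch) / len(batch)


# Usage: the first example already favors the chosen answer
batch = [(-4.0, -6.0, -5.0, -5.0), (-5.0, -5.0, -5.0, -5.0)]
print(dpo_batch_loss(batch))
```

When the policy and the reference assign identical probabilities, the loss is log 2; it falls as the model shifts probability toward the chosen answer and rises if it favors the rejected one.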

RLHF vs. DPO: The Comparison Every CTO Needs

Choosing between these two isn't about which one is "better" in a vacuum. It is about which one fits your specific engineering goals and budget.

| Feature | RLHF | DPO |
| --- | --- | --- |
| Complexity | High (policy, reference, reward, and value models) | Low (policy plus a frozen reference copy) |
| Compute Cost | High | Low to Moderate |
| Reasoning Depth | Superior for complex tasks | Excellent for style and tone |
| Stability | Fragile / Hard to tune | Very Stable |
| Data Requirement | Human ranking data (to train the Reward Model) plus prompts for RL rollouts | Paired preference data (chosen vs. rejected) |

At AquSag, we often advise our clients to look at their data. If you have a highly specialized domain, like legal or deep tech, where "nuance" is everything, the investment in RLHF is usually worth it. However, if you are looking to refine the tone of your Signal-Based Selling Automation tools, DPO will get you there faster and cheaper.

Building a Domain-Specific LLM: The AquSag Way

We don't believe in a "one size fits all" approach to AI. As a Technical Infrastructure Partner, our role is to ensure that the engineering behind your model is as stable as it is innovative.

Step 1: Data Curation and "Clean Rooms"

Alignment is only as good as the data you feed it. We help organizations build secure data pipelines. Before we even touch RLHF or DPO, we use a Data Mesh Architecture to keep your data decentralized and secure. This reduces the risk of "data poisoning" and helps ensure your model learns from the best possible sources.
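As a small illustration of what curation means at the preference-data level, here is a Python sketch that drops pairs carrying no usable training signal before they reach RLHF or DPO. The `prompt`/`chosen`/`rejected` record schema and the three filters are illustrative assumptions; a real pipeline might also add near-duplicate detection, PII scrubbing, and annotator-agreement checks.

```python
from typing import Iterable


def clean_preference_pairs(records: Iterable[dict]) -> list[dict]:
    """Keep only complete, informative, unique preference pairs.

    Assumes each record is a dict with string 'prompt', 'chosen' and
    'rejected' fields (a hypothetical schema, not a fixed standard).
    """
    seen = set()
    cleaned = []
    for rec in records:
        prompt = (rec.get("prompt") or "").strip()
        chosen = (rec.get("chosen") or "").strip()
        rejected = (rec.get("rejected") or "").strip()
        if not (prompt and chosen and rejected):
            continue  # incomplete record: a field is missing or blank
        if chosen == rejected:
            continue  # identical answers: nothing for the model to prefer
        key = (prompt, chosen, rejected)
        if key in seen:
            continue  # exact duplicate of a pair already kept
        seen.add(key)
        cleaned.append({"prompt": prompt, "chosen": chosen, "rejected": rejected})
    return cleaned


# Usage: only the first record survives
raw = [
    {"prompt": "Summarize clause 4", "chosen": "Clause 4 limits liability.", "rejected": "No idea."},
    {"prompt": "Summarize clause 4", "chosen": "Clause 4 limits liability.", "rejected": "No idea."},
    {"prompt": "Define EBITDA", "chosen": "Same text", "rejected": "Same text"},
    {"prompt": "Define EBITDA", "chosen": "", "rejected": "Unclear"},
]
print(clean_preference_pairs(raw))
```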

Step 2: The Alignment Choice

We evaluate your specific use case. Are you building a system that needs to handle high-stakes financial data? We might lean toward RLHF. Are you modernizing your customer service by Refactoring Legacy Code to API-First Microservices? DPO might be the more efficient path to integrating a helpful AI assistant.

Step 3: Deployment and Monitoring

A model is a living thing. Even after alignment, we deploy Managed Engineering Pods to monitor your model’s performance in the real world. This ensures that as the market shifts, your model stays aligned with your business goals.

The Risks of Poor Alignment

In the world of enterprise software, a "hallucination" isn't just a funny mistake; it is a liability.

We have seen companies try to skip the alignment phase, relying instead on "prompt engineering." This is a temporary fix. For true Stability as a Service, the intelligence must be baked into the weights of the model itself. Poorly aligned models can leak sensitive data, ignore safety protocols, or simply provide a poor user experience that damages your brand.

This is why Green IT Audits and Carbon-Aware Computing are becoming so important. If you have to retrain your model five times because the alignment failed, you aren't just wasting money; you are creating a massive carbon footprint. Getting the alignment right the first time is both a business and an ethical necessity.

The Future: Hybrid Alignment and Continuous Learning

As we look toward the end of 2026, the "RLHF vs. DPO" debate may evolve into a hybrid model. We are already experimenting with workflows that use DPO for quick style adjustments and RLHF for deep reasoning tasks.

The goal is always the same: building a technical bench that is capable of handling the most complex challenges in software engineering today. Whether that involves building Autonomous Agentic AI Workflows or fine-tuning a niche model for a specific vertical, the focus must remain on engineering integrity.

Why Choose AquSag Technologies as Your Engineering Partner?

The shift toward specialized AI requires a different kind of partner. You don't just need "coders"; you need architects who understand the mathematical foundations of AI alignment.

At AquSag, we provide the specialized technical infrastructure and managed teams required to bring these complex models to life. We act as a bridge between the cutting edge of AI research and the practical realities of enterprise delivery.

Our Specialized AI Engineering Services:

- Custom RLHF and DPO implementation pipelines.

- Domain-specific model fine-tuning and evaluation.

- Secure AI infrastructure and AI Red-Teaming and Governance.

- Managed "pods" of AI and ML engineers on our internal payroll.

- Strategic consulting for Cost-Efficient AI Scaling.

Stop Guessing. Start Engineering.

The difference between a "prototype" and a "production-ready" AI model is the quality of its alignment. If your organization is ready to build a proprietary intelligence that actually drives results, you need an engineering partner that prioritizes stability, security, and ROI.

Don't let your AI strategy be a black box. Let's build a model that understands your business as well as you do.

Contact AquSag Technologies for Expert AI Model Alignment

Are you ready to scale your engineering output with a partner that truly understands the technical infrastructure of the future? Whether you need to fine-tune a domain-specific model or build a complex multi-agent system, our team is ready to deliver.